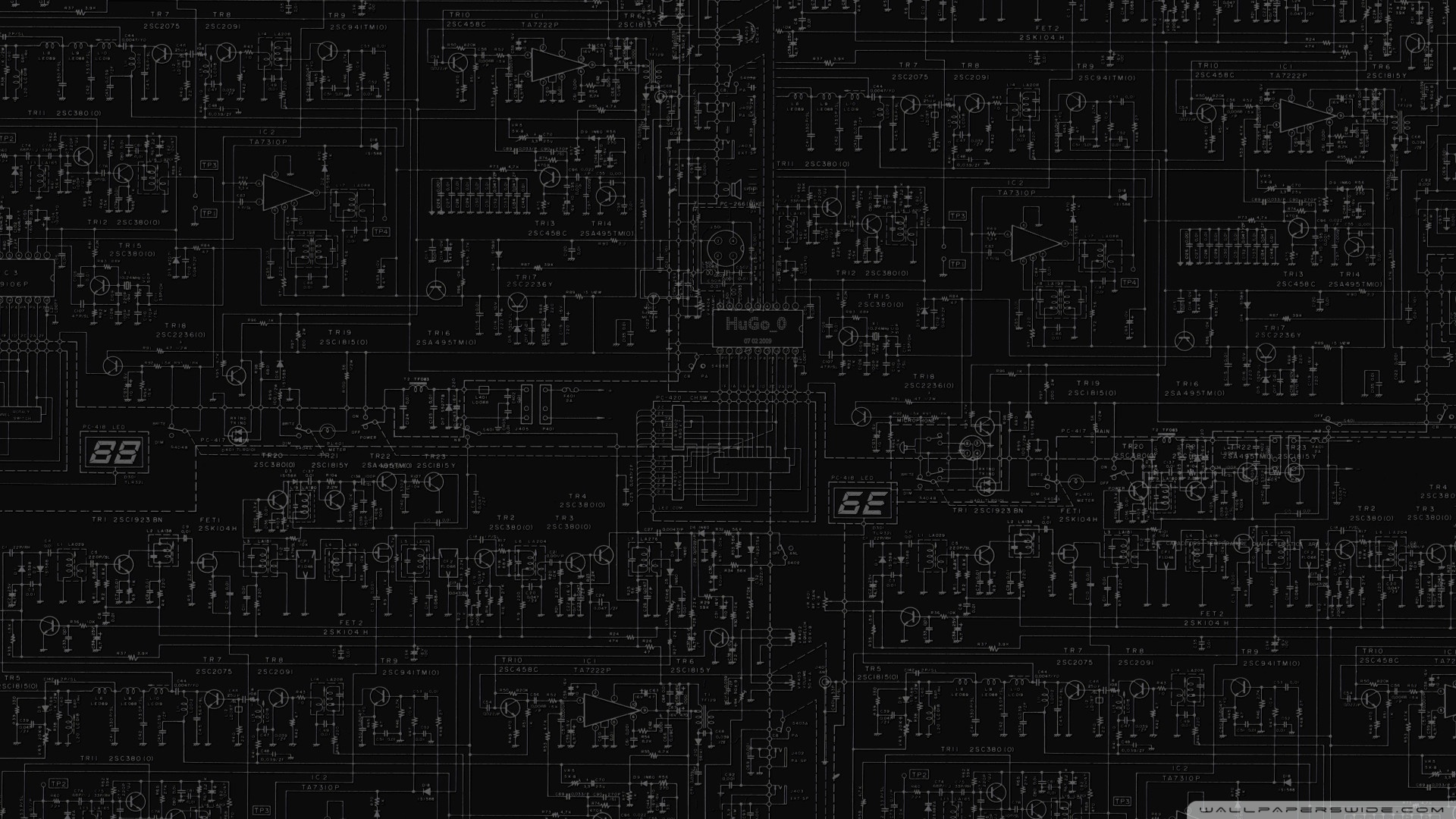

This, above. Unfortunately, NVIDIA took all the OC headroom out of the silicon and sold it at max clocks.

It's a little better with upcoming GPUs, but you're still looking at 100MHz max. On a GPU that does over 2GHz stock, 100MHz is 5%, which is nothing, as that translates to 2 to 3% in-game performance if you're lucky (most games now are GPU compute bound, and compute does not scale the way fixed-function operations do).
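To put rough numbers on that, here's a quick back-of-the-envelope sketch. The `scaling` factor is my own assumption, not a measured value: it's just the fraction of a clock bump that shows up as frame rate, and 0.4 to 0.6 is what the 2 to 3% figure above implies for a 5% clock gain. The function name is made up for illustration.

```python
def oc_gain(stock_mhz, offset_mhz, scaling):
    """Return (clock gain, estimated in-game gain) as fractions.

    scaling is an assumed fudge factor: how much of the clock
    increase actually turns into frame rate (1.0 = perfect scaling).
    """
    clock_gain = offset_mhz / stock_mhz
    return clock_gain, clock_gain * scaling


# 100MHz on top of a 2000MHz stock clock, at three assumed scaling factors
for scaling in (0.4, 0.5, 0.6):
    clock_gain, game_gain = oc_gain(2000, 100, scaling)
    print(f"scaling {scaling}: {clock_gain:.1%} clock, {game_gain:.1%} in game")
```

Your own scaling will depend on the game and resolution, so treat this as a sanity check rather than a prediction.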

The best thing you can do is try to keep your clocks stable in game. For instance, instead of having your clock drop from 1999MHz to ~1977MHz, you can hold it at 1999MHz as your max OC.

I've been asking around about this, and it's largely due to smaller nodes and the thermal dissipation constraints they impose: the heat simply can't escape fast enough to yield the typical gains we used to have. It gets worse with every node shrink, and 9 to 7nm will be worse. It's precisely why on the CPU side we can't do much better than ~5GHz, which we've had since Sandy Bridge's 32nm node, even though we are at 14nm.

I shouldn't say this, as it's what my bread and butter is based upon, but there's literally no difference between GPUs anymore. There's no cooler that will give you even 20MHz more than the others. The difference has moved right up to exotic cooling with DICE or LN2. Other than that, leave your GPU as is and play with memory, as there's headroom there for anything above FHD, as said by @CRE4MPIE; memory is what you may want to play with.

If you want, you can try different drivers as well, as clock limits will differ slightly between them, but honestly I wouldn't bother; it's not worth the hassle.